Employment Workplace Discrimination With AI In Cook

FAQ

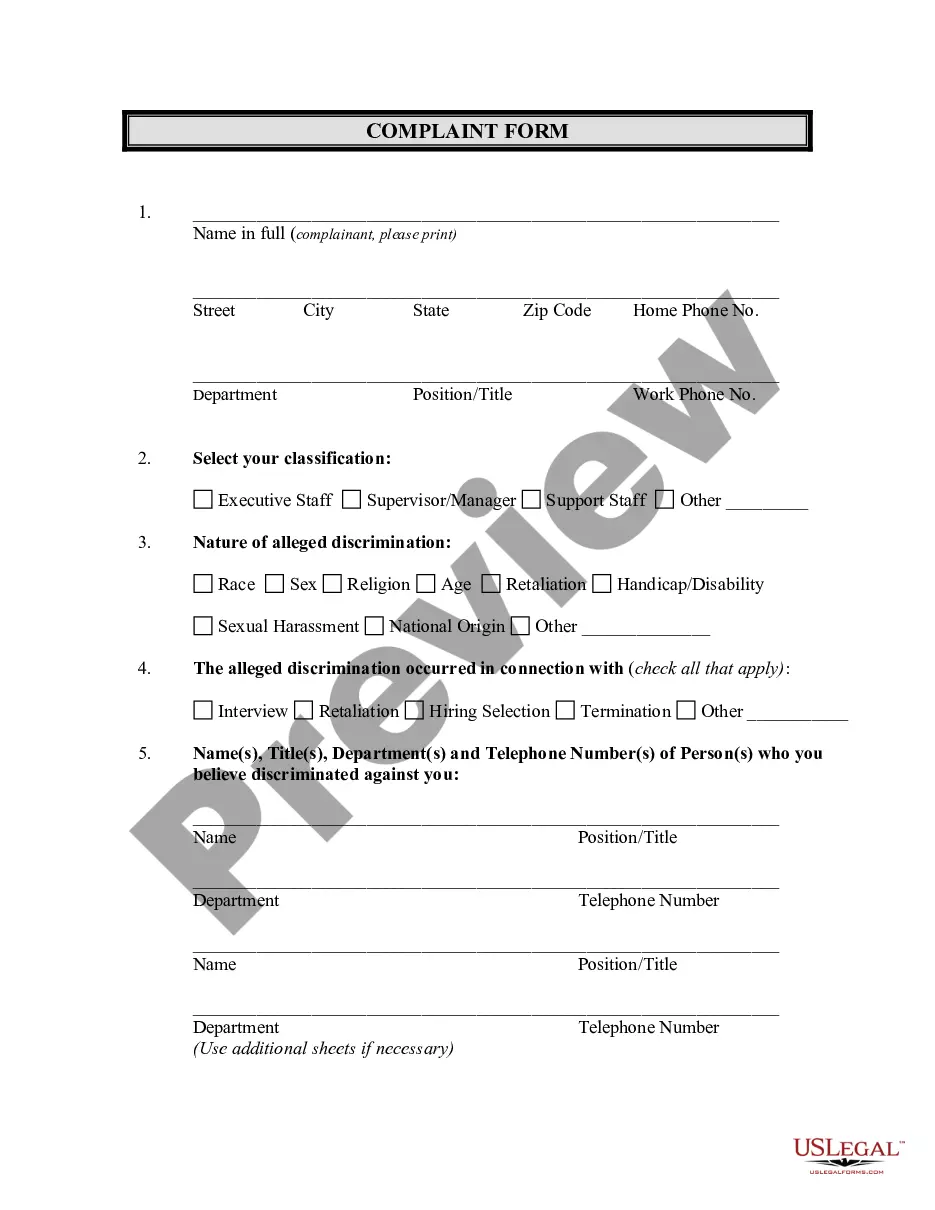

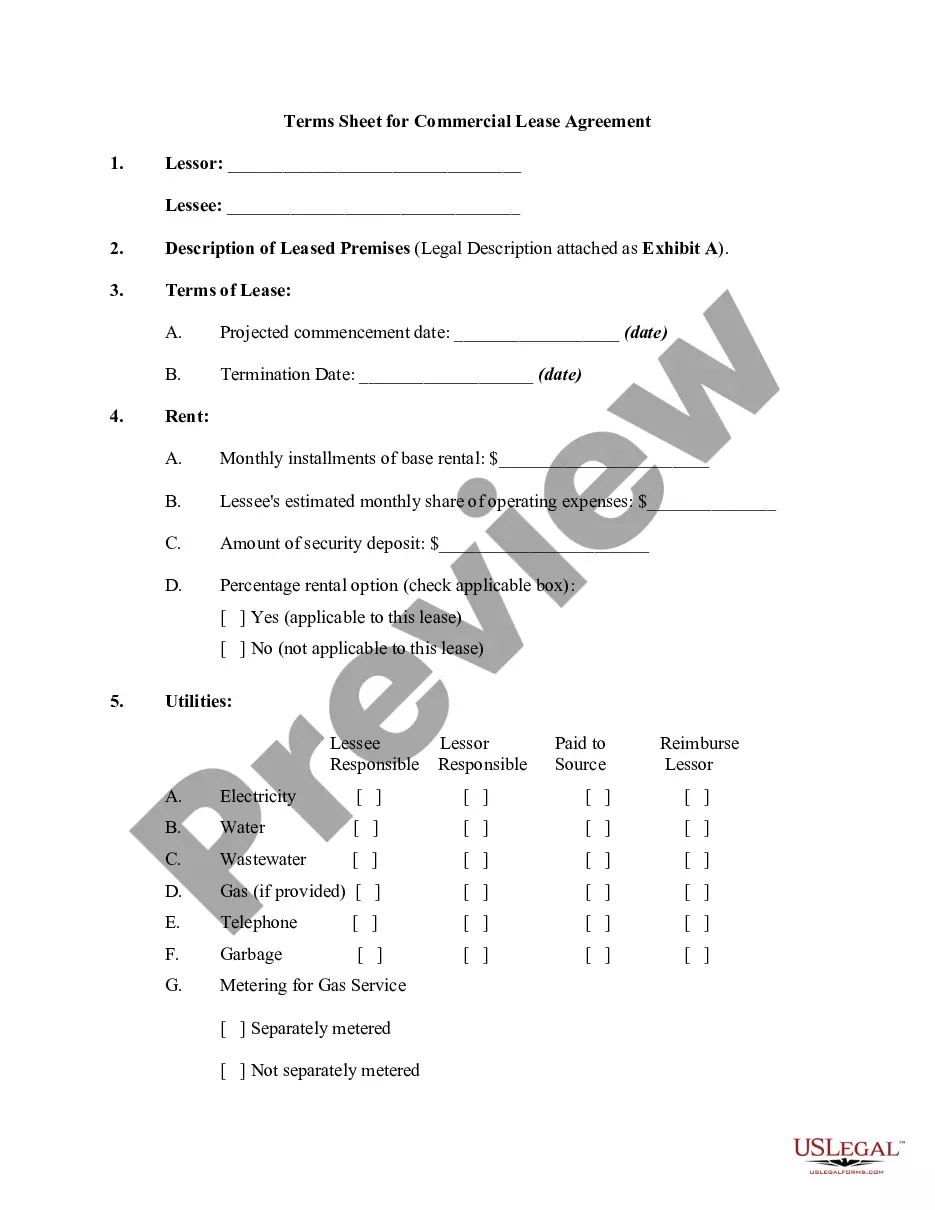

One example is a facial recognition system that is less accurate at identifying people of color, or a language translation system that associates certain languages with particular genders or stereotypes.

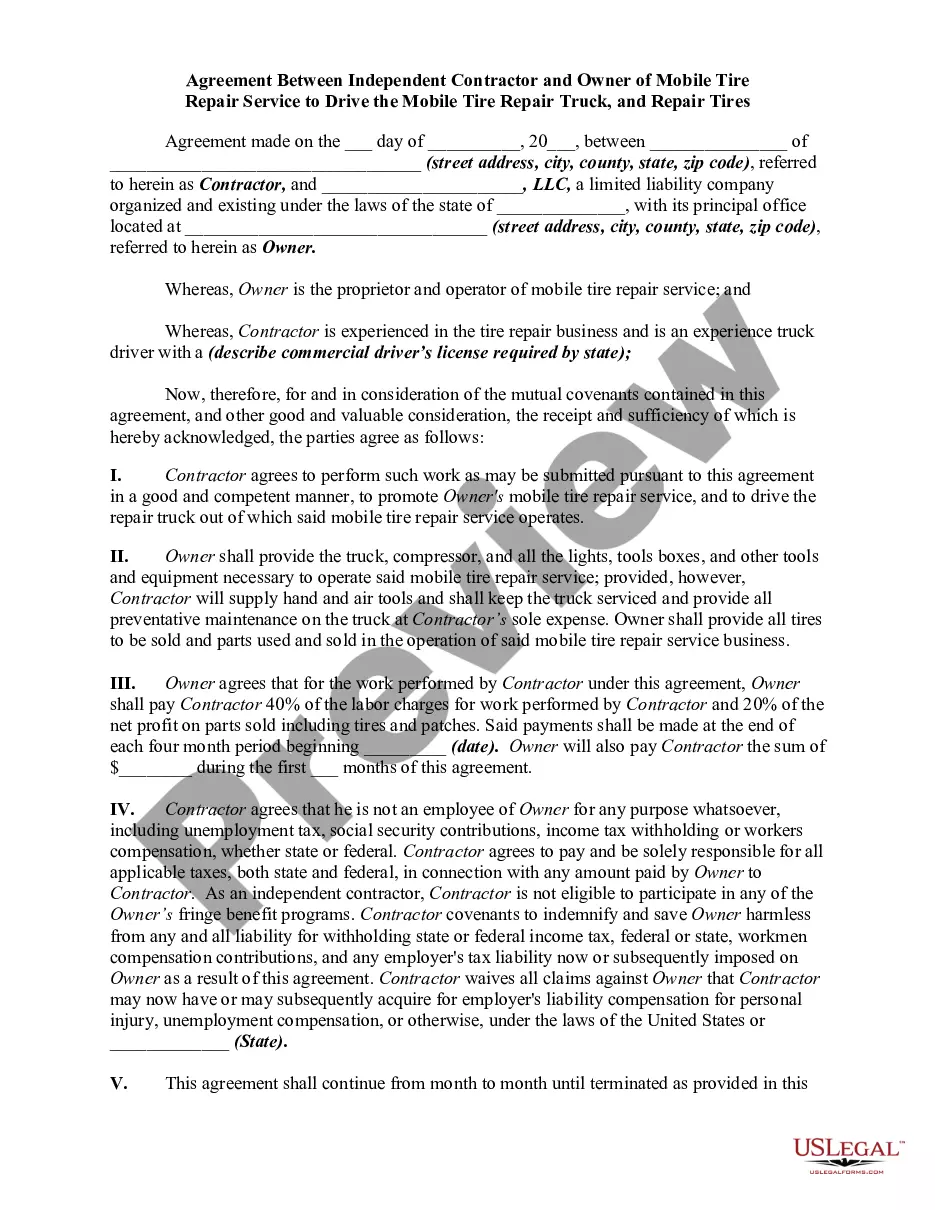

The technical guidance explains that an employer's use of an algorithmic decision-making tool may be unlawful because (1) the employer does not provide a reasonable accommodation necessary for a job applicant or employee to be rated fairly and accurately by the algorithm; (2) the employer relies on an algorithmic ...

For example, an organization's AI screening tool was found to be biased against older applicants when a rejected candidate landed an interview after resubmitting their application with a different birthdate that made them appear younger.

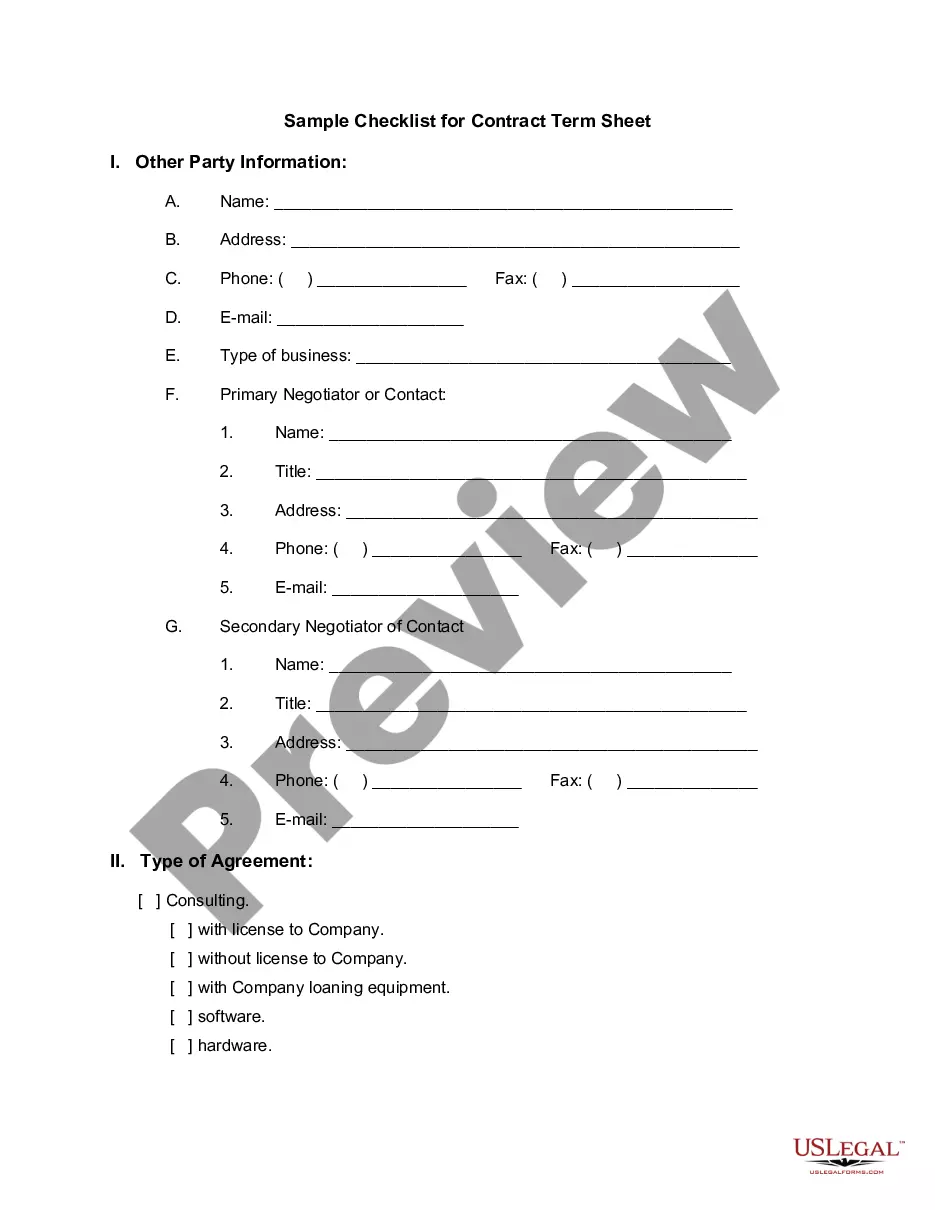

Congress has not yet passed any legislation related to AI in the workplace, but a few bipartisan bills have been introduced. The Technology Workforce Framework Act of 2024 (S. 3792), sponsored by Sens.

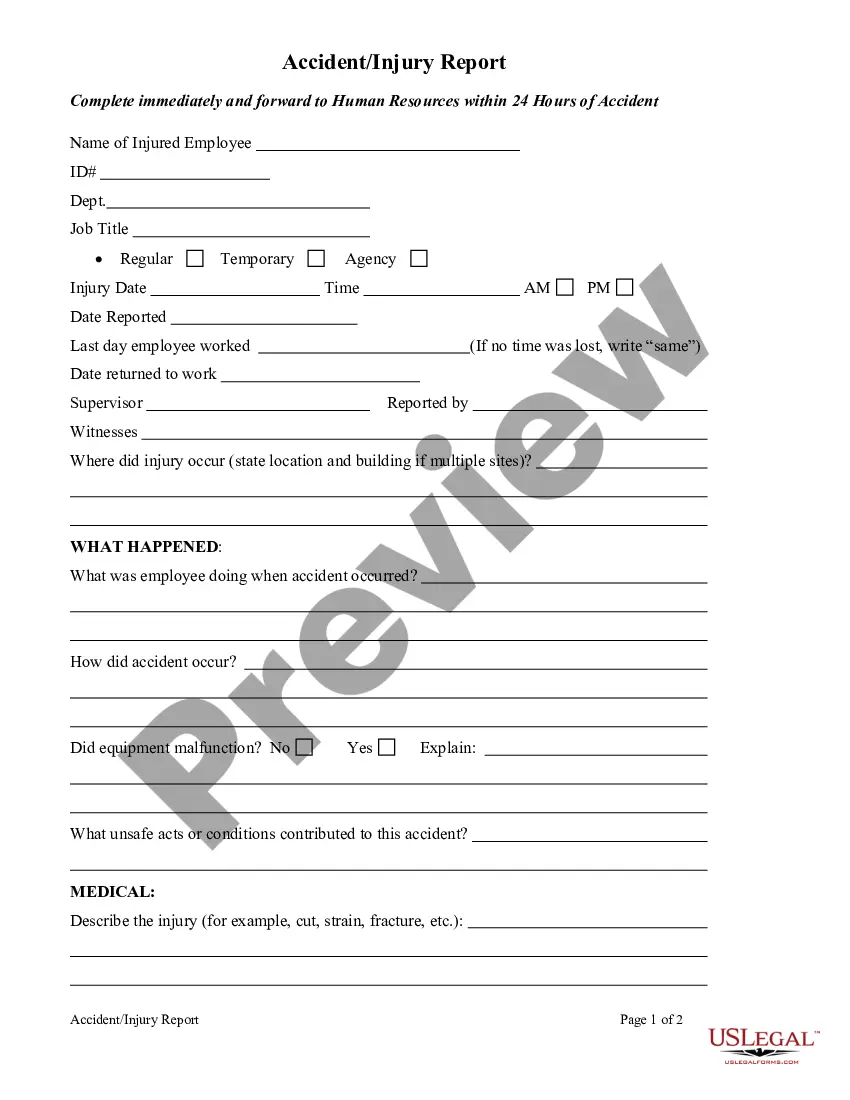

No, your employer cannot compel you to do anything. If you feel it's illegal, go to the labor board in your state or talk to the Feds. If you think it's immoral, call up an eager newspaper reporter. If you want to sue, see a lawyer for details.

Protecting Labor and Employment Rights: AI systems should not violate or undermine workers' right to organize, health and safety rights, wage and hour rights, and anti-discrimination and anti-retaliation protections.

An AI policy should inform employees whether they are required to seek approval before using AI on the job. Companies may also consider requiring employees to report to their supervisor any time they use AI for a new purpose or for a new client or customer.

Developers and employers using AI must maintain their compliance with anti-discrimination legal requirements. Developers can minimize disparate or adverse impacts in design by ensuring the data inputs used to train AI systems, and the algorithms and machine learning models, do not reproduce bias or discrimination.
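
One common way to check a screening tool for adverse impact is to compare selection rates across groups. The sketch below is illustrative only and is not taken from the guidance quoted above: it assumes the "four-fifths" rule of thumb from the EEOC's Uniform Guidelines on Employee Selection Procedures, under which a group whose selection rate falls below 80% of the highest group's rate is flagged for closer review. The function names, group labels, and applicant counts are hypothetical, and a ratio below 0.8 is a signal to investigate, not a legal finding.

```python
from collections import Counter


def selection_rates(records):
    """Return {group: share of applicants selected} from (group, selected) pairs."""
    totals = Counter()
    selected = Counter()
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    top = max(rates.values())
    if top == 0:
        # Nobody was selected in any group, so there is no disparity to measure.
        return {group: 1.0 for group in rates}
    return {group: rate / top for group, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """List groups whose impact ratio falls below the threshold (assumed 0.8)."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical screening outcomes: 100 applicants in each age group.
records = (
    [("under_40", True)] * 48 + [("under_40", False)] * 52
    + [("40_plus", True)] * 24 + [("40_plus", False)] * 76
)

rates = selection_rates(records)
ratios = impact_ratios(rates)
flagged = flag_groups(ratios)

print(rates)
print(ratios)
print(flagged)
```

In this made-up data the older group is selected at half the rate of the younger group, so it falls below the assumed 0.8 threshold and would be flagged for further review of the tool's inputs and design.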